NixOS k8s

Created by Jaka Hudoklin / @offlinehacker

About me?

Full-stack software engineer working in javascript, python, c, nix and more, with experience in web technologies, system provisioning, embedded devices and security.

Projects

http://gatehub.net: a new fintech platform for multi-currency payments, trading and exchange, based on ripple

A data-driven distributed task automation and data aggregation framework using graph databases, docker containers and nix.

The question is: how can we scale (nix) applications in production?

How to deploy:

- ... scalable nixos systems?

- ... scalable apps on top of nixos?

- ... scalable and reliable distributed storage?

- ... scalable monitoring system?

What do we need?

- Cluster process manager

- Secure distributed overlay networking

- Load balancer

- Distributed and replicated storage

- Scheduler for resources like storage, processing power, networking, ...

- Cluster manager

- Monitoring system

(Container) service managers

- systemd (nspawn)

- docker

- rkt (rocket)

- lxc

Overlay networking

- openvswitch

- weave

- flannel

- libnetwork

Distributed and replicated storage

- ceph

- glusterfs

- xtreemfs

- Reliable cloud storage like amazon ebs

Available container cluster managers

- google kubernetes

- coreos fleet

- docker swarm

- dokku

- rancher

Many more are listed here: http://stackoverflow.com/questions/18285212/how-to-scale-docker-containers-in-production

Docker and nix

- Primarily used for running applications, not whole distros

- Running nix inside docker is easy

- One benefit compared to other docker images: you can pick the exact versions of the packages you want to run.

$ cat Dockerfile
FROM nixos/nix:1.10
RUN nix-channel --add http://nixos.org/releases/nixpkgs/nixpkgs-16.03pre71923.3087ef3/ dev
RUN nix-channel --update
RUN nix-env -iA dev.nginx
ADD nginx.conf nginx.conf
CMD nginx -c $PWD/nginx.conf -g 'daemon off;'

$ docker build -t offlinehacker/nginx .
$ docker run -ti -p 80:80 offlinehacker/nginx

What about running nixos in docker?

You can run nixos inside docker containers using --privileged mode

... but, you don't want to do that

A working implementation of a service abstraction layer is in progress, but it is currently not high on my priority list

Kubernetes project

Distributed cluster manager for docker containers

- First announced and open-sourced by Google in 2014

- Influenced by Google's Borg system

- Written in go

- Uses docker as primary process manager

- Support for rkt (rocket)

- ~20,000 commits, 555 contributors

- Current stable release: 1.1.1

Provides:

- Replication

- Load balancing

- Integration with distributed storage (ebs, ceph, glusterfs, nfs, ...)

- Resource quotas

- Logging and monitoring

- Declarative configuration

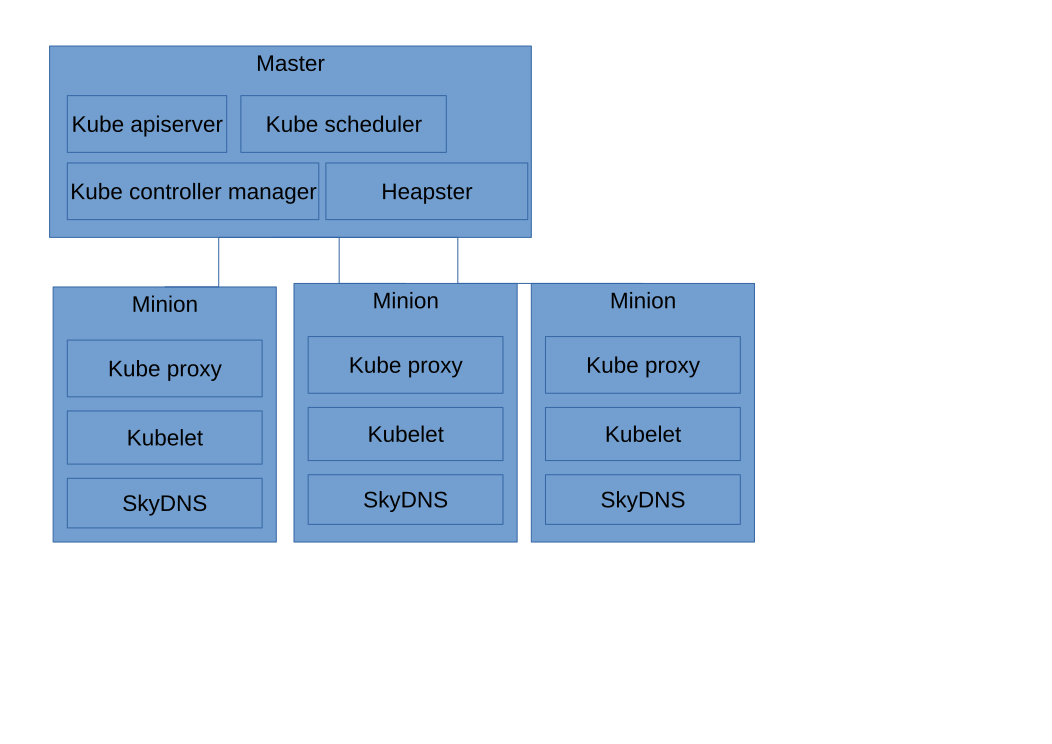

Separation of components into microservices:

- kube-apiserver: http api service

- kube-controller-manager: management of resources

- kube-scheduler: scheduling of resources like servers and storage

- kube-proxy: load balancing proxy

- kubelet: container management and reporting on minions

Kubernetes terms:

- Namespace: a separate group of pods, replication controllers and services

- Minion: a worker node

- Pod: a group of containers running on the same node

- Replication controller: A controller for a group of pods

- Service: Internal load balancer for a group of pods

Demo

Create replication controller

$ cat nginx-controller.yaml
apiVersion: v1
kind: ReplicationController
metadata:
  name: nginx-controller
spec:
  replicas: 2
  # selector identifies the set of Pods that this
  # replication controller is responsible for managing
  selector:
    app: nginx
  # podTemplate defines the 'cookie cutter' used for creating
  # new pods when necessary
  template:
    metadata:
      labels:
        # Important: these labels need to match the selector above
        # The api server enforces this constraint.
        app: nginx
    spec:
      containers:
      - name: nginx
        image: offlinehacker/nginx
        ports:
        - containerPort: 80

$ kubectl create -f nginx-controller.yaml
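The relationship between the selector, the pod labels and the replica count described in the comments above can be modelled in a few lines. This is only a minimal Python sketch of the idea, not Kubernetes code: `matches` and `reconcile` are hypothetical helper names, pods are plain dicts, the pod named `other-pod` is made up, and only equality-based selectors are considered.

```python
# Illustrative model only; these helpers are hypothetical and
# not part of any Kubernetes API.


def matches(selector, labels):
    """Return True if every key/value pair in the selector appears in the labels."""
    return all(labels.get(key) == value for key, value in selector.items())


def reconcile(selector, replicas, pods):
    """Return how many pods to create (positive) or delete (negative)
    so that exactly `replicas` pods match the selector."""
    running = [pod for pod in pods if matches(selector, pod["labels"])]
    return replicas - len(running)


pods = [
    {"name": "nginx-controller-c5sik", "labels": {"app": "nginx"}},
    {"name": "other-pod", "labels": {"app": "redis"}},  # hypothetical unrelated pod
]

# One matching pod exists and two are wanted, so one more has to be created.
missing = reconcile({"app": "nginx"}, 2, pods)
print(missing)
```

If the labels in the pod template did not match the selector, newly created pods would never be counted as running, which is presumably why the api server enforces the constraint.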

Expose the created pods using a load balancer

$ cat nginx-service.yaml
apiVersion: v1
kind: Service
metadata:
  name: nginx-service
spec:
  ports:
  - port: 8000 # the port that this service should serve on
    # the container on each pod to connect to, can be a name
    # (e.g. 'www') or a number (e.g. 80)
    targetPort: 80
    protocol: TCP
  # just like the selector in the replication controller,
  # but this time it identifies the set of pods to load balance
  # traffic to.
  selector:
    app: nginx

$ kubectl create -f nginx-service.yaml
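What the service definition above expresses can be sketched as follows: traffic arriving on the service port is spread over the pods whose labels match the selector, on their target port. This is a simplified Python model under stated assumptions, not how kube-proxy is implemented: the `Service` class and the pod IP addresses are hypothetical, and round-robin is assumed as the balancing strategy.

```python
import itertools

# Illustrative model only; `Service` is a hypothetical class,
# not a Kubernetes API object.


class Service:
    def __init__(self, port, target_port, selector):
        self.port = port                # port the service listens on
        self.target_port = target_port  # port on each selected pod
        self.selector = selector        # labels a pod must carry

    def endpoints(self, pods):
        """List (ip, target_port) for every pod whose labels satisfy the selector."""
        return [
            (pod["ip"], self.target_port)
            for pod in pods
            if all(pod["labels"].get(k) == v for k, v in self.selector.items())
        ]


# Hypothetical pods; the IP addresses are made up for the example.
pods = [
    {"ip": "10.10.0.2", "labels": {"app": "nginx"}},
    {"ip": "10.10.0.3", "labels": {"app": "nginx"}},
    {"ip": "10.10.0.4", "labels": {"app": "redis"}},
]

service = Service(port=8000, target_port=80, selector={"app": "nginx"})
backends = service.endpoints(pods)

# Round-robin over the selected backends (an assumption of this sketch).
rotation = itertools.cycle(backends)
first_three = [next(rotation) for _ in range(3)]
print(first_three)
```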

Some additional commands

$ kubectl get nodes
$ kubectl get pods
$ kubectl logs nginx-controller-c5sik
$ kubectl exec -t -i -p nginx-controller-c5sik -c nginx -- sh

Running kubernetes on nixos

There is a nixos module, created and maintained by me

It has been deployed in production for quite some time, so far without any major issues

A few lines of nixos config

networking.bridges = {
  cbr0.interfaces = [ ];
};
networking.interfaces = {
  cbr0 = {
    ipAddress = "10.10.0.1";
    prefixLength = 24;
  };
};
services.kubernetes.roles = ["master" "node"];
virtualisation.docker.extraOptions =
  ''--iptables=false --ip-masq=false -b cbr0'';

This enables kubernetes with all the services you need for a single-node cluster

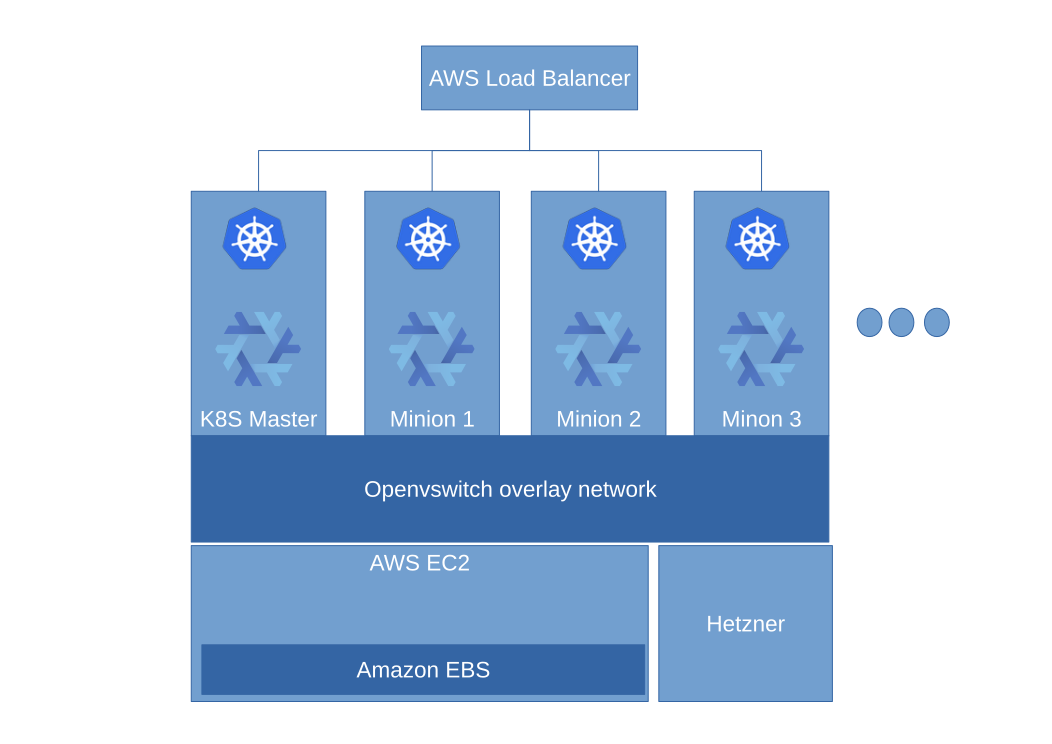

Running kubernetes in production

For production environments we need a cluster of at least three machines, one of which is the master

All machines need to be connected and have routable subnets
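One way to picture the "routable subnets" requirement is to give every machine its own non-overlapping subnet for its container bridge. The following is a minimal Python sketch of that idea using the standard `ipaddress` module. The cluster-wide range `10.10.0.0/16` and the node names are assumptions made for illustration; only the `10.10.0.1/24` bridge address appears in the single-node configuration earlier.

```python
import ipaddress

# Hypothetical cluster-wide range; only 10.10.0.1/24 comes from the talk.
cluster_range = ipaddress.ip_network("10.10.0.0/16")
nodes = ["node-a", "node-b", "node-c"]  # hypothetical host names

# Give each machine the next free /24 out of the cluster range.
subnets = dict(zip(nodes, cluster_range.subnets(new_prefix=24)))

for name, subnet in subnets.items():
    # The first usable address would go to the bridge (cbr0) on that machine.
    bridge_ip = next(subnet.hosts())
    print(name, subnet, bridge_ip)

# No two node subnets may overlap, otherwise routes between
# machines would be ambiguous.
values = list(subnets.values())
overlaps = any(
    a.overlaps(b) for i, a in enumerate(values) for b in values[i + 1:]
)
print(overlaps)
```

Each machine would then use its own subnet for its bridge, and the overlay network only has to route whole subnets between machines.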

How do we do it?

- Several aws instances in VPC

- Deployment with nixops

- Overlay ipsec networking with openvswitch

- EBS as storage

- Separate production and development namespaces

Monitoring

- Collectd for metrics aggregation

- Influxdb for metrics storage

- Grafana as dashboard for metrics visualization

- Bosun for alerting

- Heapster for kubernetes monitoring

- Grafana and kibana for aggregation and visualization of logs

Configuration?

I have developed a set of reusable nixos profiles that you can simply include in your configuration. They are available at https://github.com/offlinehacker/x-truder.net

... they still need better documentation

EOF

My social media and sites:

- github: github.com/offlinehacker

- twitter: @offlinehacker

- irc: offlinehacker@freenode

- www: http://www.jakahudoklin.com